One of the Millions of Learning Paths to Deep Learning for Programmers

Do you have academic or professional programming experience, and are you trying to get your head around this deep learning stuff but getting lost among the countless books, tutorials, and tools available on the internet?

I can help you with that.

You will surely need a solid understanding of many math topics before you can call yourself a deep learning expert. But if you know some matrix operations, differential calculus, and curve fitting, you can hit the road. There will be bumps as you go: you will take a break, go back to your high school math books, find the intuition, and resume the journey. Life is too short to complete all the prerequisites beforehand!

Probably the biggest barrier to starting the journey is deciding on our tools: language, framework, IDE, etc. Experts are often unwilling to help with that. For one thing, they are afraid of being criticised by advocates of competing tools. Glad that I am not an expert.

But I am taking that risk anyway because, if you are a programmer like me, it is very important to pin down all the parameters so that we can stay focused. We can easily end up spending hours looking for a productive IDE theme, let alone an IDE (and still remain undecided).

After wasting hundreds of unproductive hours on such searches, I found that it is more important to take ANY of those paths than to waste time searching for the BEST one. I am sharing here the path I took, excluding some detours that I later found were not very helpful.

Language: Python

After installing a fresh OS, probably the first thing you do is download Chrome. But if your friend asks for your suggestion about a browser, you might give a series of lectures describing the pros and cons of Safari, Firefox, Opera, Edge and, just as another item on the list, Chrome. Because proving that you are an expert in all of them is more important than giving a useful answer to your friend.

The same thing happened when I asked experts what language to start with to learn deep learning. “None”, I repeat, “none” of them gave a short answer. I understood how deeply they knew MATLAB, R, and Python, but I was still undecided about the right tool. However, I started with Python anyway and shortly found that it was the perfect choice for me and, with some exceptions (I don’t know what they are), for anyone on this planet in 2020/2021.

IDE: Jupyter Lab, PyCharm

The worst place to find suggestions on an IDE is the internet. Again, there are many pros-and-cons analyses that leave you undecided. Since I had spent years on IntelliJ IDEA, PyCharm, from the same JetBrains family, worked perfectly for me. I found the refactoring and debugging tools very familiar and useful.

However, I also had to learn Jupyter Lab since most of the tutorials use it. It also has some useful features like inline graph display and audio playback. Its ability to retain state is also useful. For example, if a line at the beginning of your code takes a long time to execute (maybe it downloads a big file), then every time you run the whole program, that line executes again, which is unproductive.

In Jupyter Lab, you can run that line once in its own cell and then experiment with its output in later cells, without executing the same code again and again. So, in short, familiarity with both will be helpful. If you still want to pick one, go with Jupyter Lab (maybe I also overanalysed this part).
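To make the cell idea concrete, here is a rough sketch of how such a notebook might be split. The `load_data` function and its `time.sleep` call are made-up stand-ins for a slow step like a big download; in a real notebook each commented section would live in its own cell.

```python
import time

# Cell 1: the slow step. Run this cell once.
def load_data():
    # Hypothetical stand-in for a slow download.
    time.sleep(0.1)
    return list(range(10))

data = load_data()

# Cell 2: the experiment. Rerun this cell as often as you like;
# `data` stays in memory, so the slow step is not repeated.
squares = [x * x for x in data]
total = sum(squares)
print(total)
```

Run as a plain script, both parts execute every time, which is exactly the cost the notebook lets you avoid.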

Framework: PyTorch

This is what I picked, and it worked perfectly for me. TensorFlow or other competitors might be just as fine. In my limited search, I found many places where PyTorch is claimed to be a kind of de facto standard. Maybe I missed the ones that claim the same for TensorFlow.

Python Version: The latest one supported by PyTorch

At the time of writing this article, the latest version of Python is 3.9, but the latest version that PyTorch supports without any issues is 3.8. So, I am using 3.8 at the moment.

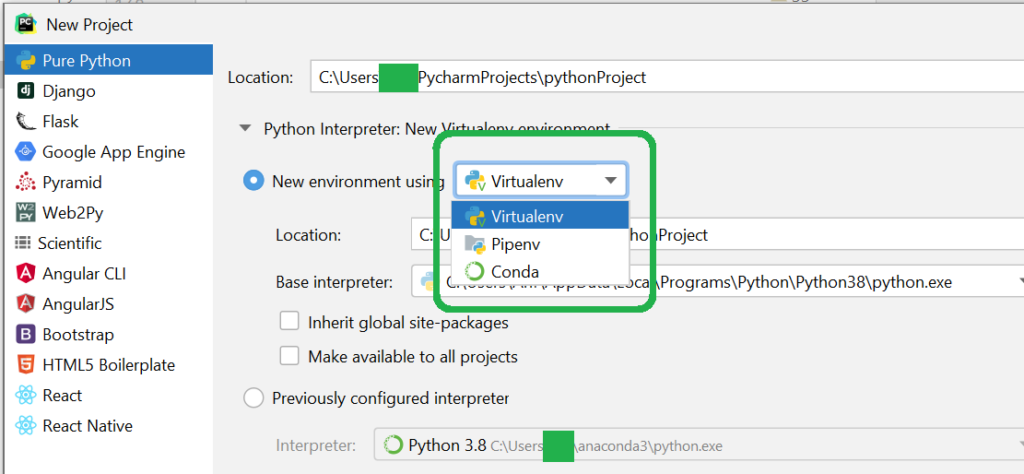

Virtual Environment: Virtualenv

If you are new to Python, you will find that you are advised to create a new virtual environment for each new project. Popular tools for this include virtualenv, pipenv, and conda. So far, I have been using virtualenv, and it is working fine for me.

Exercises

I followed:

1. “Hello World”s: Spent a day learning Pythonic best practices and features that were new to me (like generators, list comprehensions, currying, and partial application). If you are well versed in any other language, feel free to go ahead without a complete understanding of all of them. You will learn them as you go.

2. NumPy: Spent a few hours on NumPy, understanding how its arrays differ from native Python lists. Also explored different ways to initialize arrays and the shorthand operations for manipulating matrices.

3. Implement a Simple Neural Network from Scratch for the MNIST dataset: Followed this book to implement a neural network in Python. While the book is not available for free, you can follow the author's videos on the same topic. Also, the code is available on GitHub.

4. Implement a Convolutional Neural Network (CNN) from Scratch for the MNIST dataset: Well, you can jump to PyTorch after implementing a simple neural network from scratch. But if you are learning deep learning for academic purposes, I strongly suggest you implement a CNN from scratch as well, including the backpropagation part.

5. Implement other types of networks in PyTorch: Now is the time to start playing with CNNs, RNNs, LSTMs, etc. in PyTorch. While you don’t have to write the backpropagation yourself (PyTorch does it for you!), don’t forget to study the theoretical background and intuition behind them.
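For step 1, this is roughly the kind of "Hello World" I mean. It is only a small sketch using the standard library; the function names (`countdown`, `power`, `curried_add`) are made up for illustration.

```python
from functools import partial

# List comprehension: build a list in a single expression.
squares = [n * n for n in range(5)]

# Generator: produces values lazily, one at a time.
def countdown(start):
    while start > 0:
        yield start
        start -= 1

# Partial application: fix some arguments of an existing function.
def power(base, exponent):
    return base ** exponent

cube = partial(power, exponent=3)

# Currying: a function that takes one argument and returns another function.
def curried_add(a):
    def add_b(b):
        return a + b
    return add_b

print(squares)             # [0, 1, 4, 9, 16]
print(list(countdown(3)))  # [3, 2, 1]
print(cube(2))             # 8
print(curried_add(2)(3))   # 5
```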
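For step 2, a short sketch of the list-versus-array difference and a few of the shorthand operations, assuming NumPy is installed. The variable names are only illustrative.

```python
import numpy as np

py_list = [1, 2, 3]
np_array = np.array([1, 2, 3])

# The same operator means different things:
doubled_list = py_list * 2    # repeats the list: [1, 2, 3, 1, 2, 3]
doubled_array = np_array * 2  # multiplies each element: [2, 4, 6]

# A few ways to create arrays.
zeros = np.zeros((2, 3))           # 2x3 array filled with 0.0
identity = np.eye(2)               # 2x2 identity matrix
grid = np.arange(6).reshape(2, 3)  # [[0, 1, 2], [3, 4, 5]]

# Shorthand matrix operations.
product = identity @ grid     # matrix multiplication
col_sums = grid.sum(axis=0)   # sum down each column: [3, 5, 7]
transposed = grid.T           # shape (3, 2)

print(doubled_list)
print(doubled_array)
print(col_sums)
```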
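For step 5, here is a tiny sketch of what "PyTorch does it for you" looks like, assuming PyTorch is installed. The numbers are made up; the point is that `backward()` fills in the gradient that you would otherwise derive by hand.

```python
import torch

# A made-up input and a weight vector we want gradients for.
x = torch.tensor([1.0, 2.0, 3.0])
w = torch.tensor([0.5, -1.0, 2.0], requires_grad=True)

# Squared error of a single linear unit against a target of 1.0.
prediction = (w * x).sum()       # 0.5 - 2.0 + 6.0 = 4.5
loss = (prediction - 1.0) ** 2   # 3.5 ** 2 = 12.25

# Autograd computes d(loss)/d(w); no hand-written backpropagation.
loss.backward()

# By hand: d(loss)/d(w) = 2 * (prediction - 1.0) * x = [7, 14, 21]
print(w.grad)
```

Working out the same gradient on paper, as in the comment, is a good way to check that you understand what the framework is doing.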

If you have made it to this point, or even halfway, you can find your own path and your own set of tools, which might be different from mine. Now you are ready to criticize how wrong, short-sighted, incomplete, and biased I have been in this article.
